Are you curious about how AI learns from its experiences, much like a child learning to ride a bike through trial and error? Have you ever wondered how reinforcement learning (RL) shapes the intelligence of artificial agents, guiding them to make better decisions over time?

In this blog, we’ll demystify reinforcement learning, dissect its core principles, explore its practical applications, and consider its potential impact across industries. Our aim? To offer an engaging roadmap for readers who are new to AI or who want to deepen their understanding of reinforcement learning.

What’s Reinforcement Learning (RL)?

Reinforcement learning is a branch of machine learning in which an AI agent improves its decision-making by interacting with its surroundings. The agent observes its environment, takes actions, and receives feedback in the form of rewards or penalties. Over many interactions, this feedback steers the agent toward actions that maximize its cumulative reward, and therefore toward achieving its objectives.
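
To make the observe, act, and receive-feedback cycle concrete, here is a minimal Python sketch. The `CorridorEnv` class, its reward values, and both policies are hypothetical, chosen only to illustrate the loop; real projects usually rely on an existing environment library rather than a hand-rolled class, and a learning agent would update its policy from the rewards it collects.

```python
import random


class CorridorEnv:
    """Hypothetical toy environment: the agent starts in cell 0 and
    must reach the goal cell at the right end of a short corridor."""

    def __init__(self, length: int = 5):
        self.length = length
        self.state = 0

    def reset(self) -> int:
        self.state = 0
        return self.state

    def step(self, action: int):
        # Action 0 = move left, action 1 = move right; walls clamp the position
        move = 1 if action == 1 else -1
        self.state = min(max(self.state + move, 0), self.length - 1)
        done = self.state == self.length - 1
        # Reward: +1 for reaching the goal, a small penalty for every other step
        reward = 1.0 if done else -0.01
        return self.state, reward, done


def run_episode(env: CorridorEnv, policy, max_steps: int = 100) -> float:
    """Run one episode of the agent-environment interaction loop."""
    state = env.reset()
    total_reward = 0.0
    for _ in range(max_steps):
        action = policy(state)                  # the agent chooses an action
        state, reward, done = env.step(action)  # the environment responds
        total_reward += reward                  # the feedback signal the agent learns from
        if done:
            break
    return total_reward


def random_policy(state: int) -> int:
    return random.choice([0, 1])


def always_right(state: int) -> int:
    return 1


random.seed(0)
print(run_episode(CorridorEnv(), random_policy))
print(run_episode(CorridorEnv(), always_right))  # three step penalties plus the goal reward, about 0.97
```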

Machine learning is commonly divided into three main types: supervised learning, unsupervised learning, and reinforcement learning.

Supervised learning relies on labeled datasets, and unsupervised learning uncovers patterns in unlabeled data. Reinforcement learning, by contrast, improves an agent’s decision-making through trial-and-error interaction with an environment, guided by a reward signal rather than by labeled examples.

Key Reinforcement Learning Components

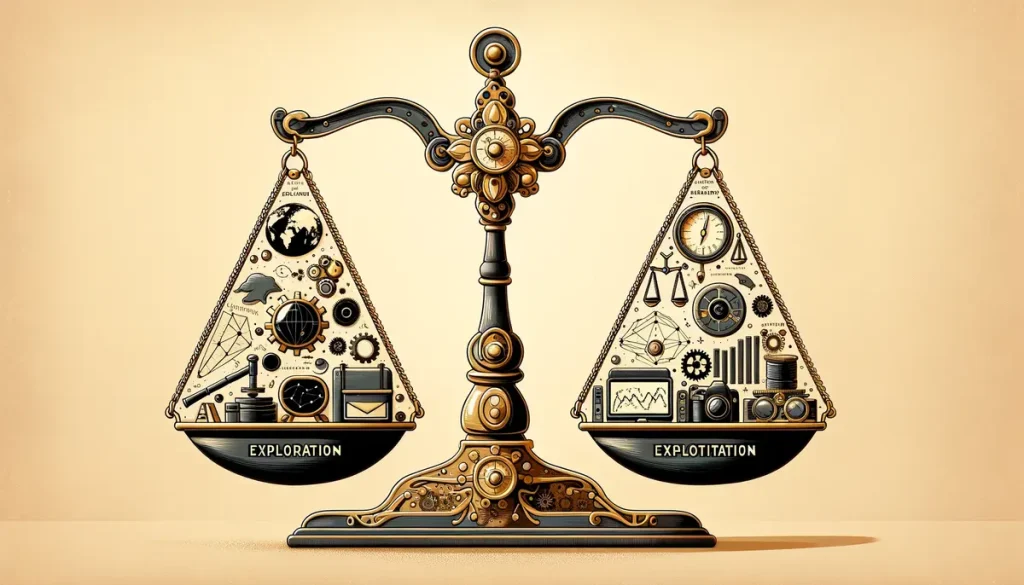

Exploration vs. Exploitation

- Exploration and exploitation are two fundamental strategies that the agent employs to navigate its environment and learn from experiences.

- Exploration involves trying out new actions and strategies to gather information about the environment and discover potentially better solutions.

- Exploitation, on the other hand, entails leveraging the agent’s existing knowledge and experience to select actions that are known to yield high rewards.

- Striking a balance between exploration and exploitation is crucial for effective learning. Too much exploration may lead to inefficient use of resources and prolonged learning times, while excessive exploitation may cause the agent to settle for suboptimal solutions without exploring better alternatives.

- Reinforcement learning algorithms incorporate mechanisms to manage the exploration-exploitation trade-off, aiming to help the agent learn efficiently while maximizing long-term reward.
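
One common mechanism is epsilon-greedy action selection, sketched below in Python on a multi-armed bandit (a simplified RL setting with a single state). With probability `epsilon` the agent explores by trying a random arm; otherwise it exploits the arm with the highest estimated value. The three arms’ win probabilities, the value of `epsilon`, and the step count are illustrative assumptions.

```python
import random


def epsilon_greedy_bandit(true_probs, epsilon=0.1, steps=5000, seed=0):
    """Epsilon-greedy action selection on a Bernoulli multi-armed bandit.

    true_probs: hypothetical win probability of each arm (hidden from the agent).
    Returns (estimated value per arm, number of pulls per arm).
    """
    rng = random.Random(seed)
    n_arms = len(true_probs)
    estimates = [0.0] * n_arms  # the agent's current value estimate for each arm
    counts = [0] * n_arms       # how often each arm has been tried

    for _ in range(steps):
        if rng.random() < epsilon:
            # Explore: try a random arm to gather information
            arm = rng.randrange(n_arms)
        else:
            # Exploit: pick the arm with the highest estimate (ties broken randomly)
            best = max(estimates)
            arm = rng.choice([a for a, v in enumerate(estimates) if v == best])
        reward = 1.0 if rng.random() < true_probs[arm] else 0.0
        counts[arm] += 1
        # Incremental sample-average update: Q <- Q + (r - Q) / n
        estimates[arm] += (reward - estimates[arm]) / counts[arm]
    return estimates, counts


estimates, counts = epsilon_greedy_bandit([0.2, 0.5, 0.8], epsilon=0.1)
print(counts)     # most pulls should go to the best arm (index 2)
print(estimates)  # well-sampled arms approach their hidden win probabilities
```

Setting `epsilon=0` removes exploration entirely, so the agent can lock onto whichever arm happened to pay off first; a larger `epsilon` spends more pulls on arms already known to be worse.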

Learning Algorithms

- Reinforcement learning algorithms play a pivotal role in enabling agents to learn from experiences and improve their decision-making abilities over time.

- Various algorithms exist, each with its unique strengths, weaknesses, and suitability for different types of problems and applications.

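- Q-learning is one of the earliest and most widely used reinforcement learning algorithms. It learns a value for each state-action pair, called a Q-value, which estimates the expected long-term (discounted) reward of taking that action in that state and acting optimally afterward. The agent then favors actions with high Q-values.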

- Deep Q-Networks (DQN) use a deep neural network to approximate Q-values, which lets Q-learning scale to complex, high-dimensional state spaces such as raw screen pixels. The action space, however, must still be discrete.

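- Proximal Policy Optimization (PPO) is a policy-gradient algorithm that directly learns a parameterized policy. It optimizes the policy to maximize expected reward while discouraging each update from moving the policy too far from the previous one, which makes learning more stable.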

- These algorithms, along with many others, form the backbone of reinforcement learning research and applications, driving advancements in AI across various domains.
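
The Q-learning update can be sketched in a few lines of Python. After each step, the Q-value of the state-action pair just taken moves toward the target r + γ · max Q(s′, a′), scaled by a learning rate α: Q(s, a) ← Q(s, a) + α [r + γ max Q(s′, a′) − Q(s, a)]. The five-cell corridor, its rewards, and the hyperparameters (`alpha`, `gamma`, `epsilon`, episode count) are hypothetical choices for illustration, and the table-based version shown is tabular Q-learning rather than DQN.

```python
import random

N_STATES = 5       # hypothetical corridor: cells 0..4, goal at cell 4
ACTIONS = (0, 1)   # 0 = left, 1 = right


def step(state, action):
    """Toy dynamics: move left or right, +1 at the goal, -0.01 otherwise."""
    nxt = min(max(state + (1 if action == 1 else -1), 0), N_STATES - 1)
    done = nxt == N_STATES - 1
    return nxt, (1.0 if done else -0.01), done


def q_learning(episodes=500, alpha=0.1, gamma=0.9, epsilon=0.1, seed=0):
    rng = random.Random(seed)
    # Q-table: one value per (state, action) pair, initialized to zero
    q = [[0.0 for _ in ACTIONS] for _ in range(N_STATES)]

    def greedy(s):
        best = max(q[s])
        return rng.choice([a for a in ACTIONS if q[s][a] == best])

    for _ in range(episodes):
        s, done, steps = 0, False, 0
        while not done and steps < 100:
            # Epsilon-greedy behavior policy
            a = rng.choice(ACTIONS) if rng.random() < epsilon else greedy(s)
            s2, r, done = step(s, a)
            # TD target: bootstrap from the best next action unless the episode ended
            target = r if done else r + gamma * max(q[s2])
            q[s][a] += alpha * (target - q[s][a])
            s, steps = s2, steps + 1
    return q


q = q_learning()
policy = [max(ACTIONS, key=lambda a: q[s][a]) for s in range(N_STATES - 1)]
print(policy)  # the learned greedy policy should move right in every non-goal cell
```

DQN keeps essentially the same target but replaces the table `q` with a neural network, which is what allows it to handle state spaces far too large to enumerate.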

Training and Convergence

- Training an RL agent involves iteratively interacting with the environment, taking actions, and receiving rewards or feedback.

- As the agent explores its environment and receives feedback, it adjusts its policy or value function to improve its decision-making abilities.

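- Over time, through repeated interactions and learning updates, the agent’s policy ideally converges toward an optimal solution that maximizes long-term reward. Formal convergence guarantees exist only in restricted settings, such as tabular Q-learning with sufficient exploration and suitably decaying learning rates; with neural-network function approximation, convergence is not guaranteed.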

- Convergence refers to the process by which the agent’s policy stabilizes, indicating that it has learned an effective strategy for achieving its goals in the given environment.

- Training and convergence are iterative processes, requiring the agent to continually refine its strategies based on new experiences and feedback from the environment.

- Reinforcement learning practitioners and researchers employ various techniques to facilitate training and ensure convergence, such as adjusting learning rates, utilizing experience replay, and implementing exploration strategies like epsilon-greedy policies.
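
Two of these techniques can be sketched briefly in Python: an experience replay buffer, which stores past transitions and samples random minibatches from them for learning updates, and a linearly decaying epsilon schedule for epsilon-greedy exploration. The class and function names, the capacity, and the schedule constants are assumptions for illustration, not the interface of any particular library.

```python
import random
from collections import deque, namedtuple

Transition = namedtuple("Transition", "state action reward next_state done")


class ReplayBuffer:
    """Fixed-size experience replay memory (a minimal sketch)."""

    def __init__(self, capacity: int, seed: int = 0):
        self.memory = deque(maxlen=capacity)  # oldest transitions are evicted first
        self.rng = random.Random(seed)

    def push(self, *args) -> None:
        self.memory.append(Transition(*args))

    def sample(self, batch_size: int):
        # Uniform random minibatch: breaks the correlation between consecutive steps
        return self.rng.sample(list(self.memory), batch_size)

    def __len__(self) -> int:
        return len(self.memory)


def linear_epsilon(step: int, start: float = 1.0, end: float = 0.05,
                   decay_steps: int = 10_000) -> float:
    """Linearly anneal the exploration rate from `start` to `end`, then hold it."""
    fraction = min(step / decay_steps, 1.0)
    return start + fraction * (end - start)


buffer = ReplayBuffer(capacity=3)
for t in range(5):
    buffer.push(t, 0, 0.0, t + 1, False)
print(len(buffer))                         # 3: the capacity caps the memory
print([tr.state for tr in buffer.memory])  # [2, 3, 4]: the two oldest were evicted
print(linear_epsilon(0), linear_epsilon(5_000), linear_epsilon(20_000))
```

Early in training the agent explores almost every step; as epsilon decays it relies increasingly on what it has learned, while the small floor keeps some exploration going.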

Real-World Applications of Reinforcement Learning

- Gaming: RL has propelled AI agents to excel in games such as Go, chess, and Dota 2. Examples include Google DeepMind’s AlphaGo, which defeated top Go professional Lee Sedol in 2016, and OpenAI Five, which beat the reigning Dota 2 world champions in 2019.

- Robotics: RL plays a key role in robotic control and navigation, helping robots learn complex tasks such as object grasping, walking, and flight. Through trial and error, often in simulation before deployment on physical hardware, robots can learn to adapt to diverse environments and scenarios.

- Finance: RL is applied to trading algorithms and portfolio management, where agents learn from historical data and adapt to changing market dynamics to support financial institutions’ decision-making.

- Healthcare: RL shows promise for improving medical diagnostics and treatment planning. For instance, researchers have explored RL algorithms that optimize treatment plans for cancer patients, aiming to maximize therapy effectiveness while minimizing side effects.

Challenges and Limitations of Reinforcement Learning

- Sparse Rewards: In some environments, feedback is rare or hard to obtain, so the agent may take many actions before receiving any useful signal, which slows learning. Researchers continue to develop algorithms that handle sparse rewards more efficiently.

- Exploration vs. Exploitation Trade-off: Balancing exploration and exploitation poses a fundamental challenge in reinforcement learning. Designing algorithms capable of navigating this trade-off effectively remains an active area of research.

- Scalability: Reinforcement learning can be computationally demanding, particularly for complex environments and extensive state-action spaces. Developing more efficient algorithms and leveraging parallel computing resources are vital for scaling RL to tackle real-world challenges.
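
One established technique for sparse rewards, not named in the list above, is potential-based reward shaping: on each transition the agent receives an extra term γΦ(s′) − Φ(s), where Φ is a potential function that encodes a hint about progress. Shaping of this form is known to leave the optimal policy unchanged. The Python sketch below assumes a hypothetical five-cell corridor whose only reward is at the goal, and a hypothetical potential equal to the fraction of the corridor already covered.

```python
GAMMA = 0.9
GOAL = 4  # hypothetical corridor: cells 0..4, goal (terminal) at cell 4


def sparse_reward(next_state: int) -> float:
    """Sparse signal: the agent only receives reward when it reaches the goal."""
    return 1.0 if next_state == GOAL else 0.0


def potential(state: int, terminal: bool) -> float:
    """Hypothetical potential: fraction of the corridor already covered.
    Terminal states get potential 0, the usual convention for episodic tasks."""
    return 0.0 if terminal else state / GOAL


def shaped_reward(state: int, next_state: int) -> float:
    """Potential-based shaping: r + gamma * phi(s') - phi(s)."""
    done = next_state == GOAL
    shaping = GAMMA * potential(next_state, done) - potential(state, False)
    return sparse_reward(next_state) + shaping


print(shaped_reward(0, 1))  # moving toward the goal earns a small positive signal
print(shaped_reward(1, 0))  # moving away earns a negative one
print(shaped_reward(3, 4))  # reaching the goal still pays off overall
```

Along any path that ends at the goal, the discounted shaping terms telescope to −Φ(s₀), so the hint gives the agent feedback on every step without changing which behavior is best.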

The Future of Teaching AI Through Reinforcement Learning

Current RL research trends encompass hierarchical reinforcement learning, multi-agent systems, and inverse reinforcement learning. These approaches aim to bolster RL algorithm capabilities and address existing challenges.

As AI systems pervade our lives, addressing potential ethical concerns surrounding AI decision-making, such as fairness, transparency, and accountability, becomes paramount.

Conclusion

As we conclude our exploration of reinforcement learning, one thing is clear: its potential to transform industries is substantial. From gaming and robotics to finance and healthcare, RL is driving innovation and opening new possibilities for AI. By understanding its core principles, its current limitations, and the trends emerging in research, we can harness reinforcement learning to build intelligent machines that enhance our lives and drive progress. So let’s embrace the journey ahead, armed with knowledge and a vision for a brighter tomorrow.